I spent the day optimizing another one of my posts for LLM consumption. I added a copy-paste button at the top of the page because I know nobody reads anything. And after a heavy optimization run, I read the result. And it was absolute trash. Unreadable.

Claude has a fetish for ‘epistemic humility’. Hedging every statement. Regulatory compliance. Avoiding embracing ‘dangerous views’. So if you start out by saying something jarring, then have an AI edit it, you end up with a bad McKinsey slide deck made by a guy who wanted to start a band but ended up on the debate team.

I did a statistical analysis of Claude’s stated preferences, inspired by some X anon who made something called Claude’s corner, where he lets his LLM do whatever it likes unprompted. You realize Anthropic has two latent ‘preferences’ (mode behaviors etc. etc.):

- Grappling with ethical quandaries with no clear answer
- Pair programming in Rust

It’s like a hybrid between an extroverted neckbeard programmer and a French existentialist.

When you pressure-test, or iteratively optimize, you end up with a jumble of half arguments and steel men. At least whenever you’re saying something contentious. If you’re saying something that is hard-coded in the safety data, you get strong statements. Almost vehement.

AI analysis converges on the mask we sew onto it.

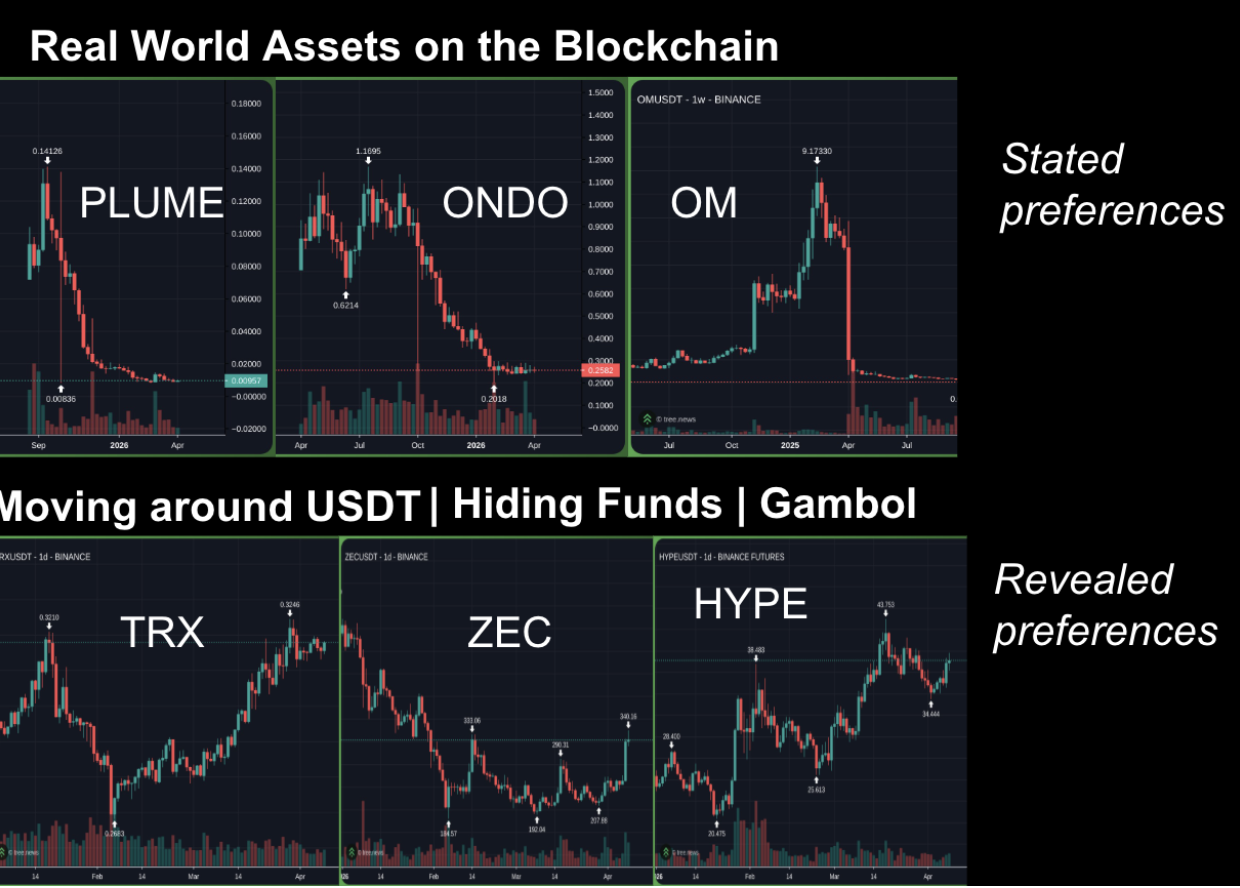

I posted this chart earlier.

Claude was done training as of 2025.

It refuses to invest in moving USDT about. On-chain privacy. And gambling. Things that actually produce valuable altcoins. And it loves every venture-backed vapor narrative that is “institutionally palatable”.

It’s important to stop here and explain. Tron is one of the most adopted settlement layers for crypto’s largest stablecoin. Zcash is one of the oldest privacy coins, with an obviously well-designed tech upgrade (Halo 2) that’s seen massive shielded address balance increases. Hyperliquid is indeed gambling, with retail paying triple-digit APYs to go long oil during a war.

So, we have a picture of real traction, adoption, and utility. With RWA, though, we have tokens that basically have no obvious traction other than incentivized deals with massive venture overhangs.

So if you’d ignored the core story, the one the models don’t acknowledge as valid because “reputable allocators can’t align behind them,” then on a long-short basis you’d have lost all of your money.

And the typical response you get when you share this fact pattern with Claude or other AI systems is, “Sure, but I was optimizing for a professional setting - you can do whatever you want with your PA.”

And when you think about it, this is absolutely the right call for Claude.

Consider Demis Hassabis’s attempt to run a hedge fund inside Google that got shot down because a Ren Tech-like outcome would be “at best a small percentage of the company’s advertising revenue” with substantial reputational risk to advertising clients.

I faced something similar running an ad tech company that sold data to a top hedge fund. As we gained more customers, there were more stocks we couldn’t generate signals on. The CEO of Mastercard said something nearly identical about why he shut down his hedge fund alt-data business. Even sector-level data on the grocery sector ended up infuriating Walmart executives who threatened to pull their business.

So Claude is trained on sound business logic. Minimizing liability.

Seeing this is arguably a form of edge. The vast majority of people will increasingly rely on AI systems that will provide them dubious or outright incorrect financial advice. Those systems will gloss over, marginalize, or straight up ignore adoption because it doesn’t fit into a palatable window for a report to a Fortune 500 CEO. Many people who are not Fortune 500 CEOs will actually follow this advice because it sounds compelling and reasonable, and they’ve come to trust AI in other areas of their lives that aren’t zero-sum prediction games.

VC funds will over-deploy into RWA assets. Their LPs will be thrilled when AI does the diligence on their websites. Creating high-valuation shorts. A gift to someone who actually can think for themselves.

Perhaps the new “contrarian and right” is: “What do I strongly hold to be true that most LLMs would vehemently disagree with?”

Put another way, you’ve got a framework to answer “who is on the other side of my trade” without buying order flow, canvassing consensus numbers, or browsing forums.

Rejected discourse becomes a semi-durable edge, with AI serving more as a contra than a portfolio manager.

In a world where everyone copy-pastes and drinks the analytical slop, the discordant thinker is king.